Here’s a scary headline: Michigan Democrats Look to Change Teacher Evaluation System.

Not so much the “Democrats” part—although I’d argue that not having a clue about evaluating teachers is common in both parties—but the implication that way fewer than 99% of public school teachers are doing acceptable work:

Consider: During the 2021-2022 school year, 99 percent of Michigan teachers were ranked either highly effective or effective on evaluations.

State Rep. Matt Koleszar, D-Plymouth, chair of the House Education Committee, told Bridge Michigan the state’s teacher evaluation system often leads to school administrators “checking a box” as they monitor teachers rather than using the process to help struggling teachers improve.

“I think when you have a better evaluation system and you’re supporting someone who needs that help and needs (those) resources, that ultimately is going to (filter down) to the student.”

I am decidedly NOT a fan of basing any percentage of a teacher’s evaluation on standardized test scores (it’s 40% in Michigan, under our current, Republican-developed system). And I am a true believer in the statement that teacher practice can be improved—and a good evaluation system (plus—key point—the time, trained personnel and resources to implement such a system) could help.

With so many moving parts, and the current handwringing (and bogus data) around low test scores in students emerging from a global pandemic, re-doing teacher evaluations which might be in place for decades seems precarious at the moment.

The questions, really, are: What are we looking for, in a teacher? What skills and qualities do good teachers exhibit—and are they measurable, with the tools we currently use? What outcomes are most critical for students—and what (easily measured) outcomes disappear quickly?

When the legislature can agree on answers to these questions—with input from the education community and invested parents, of course—let me know. Cynicism aside, how do we streamline teacher evaluation in ways that make it easy to capture and share expertise, help promising teachers build their practice, and excise the folks who shouldn’t be there?

There is, by the way, no shortage of ideas and research around teacher improvement; our international counterparts are already doing a better job of this. Anyone who’s looked at Japanese Lesson Study models or meta-analyses on building effective learning environments knows this. But investing in viable teacher evaluation systems that also build capacity will not come about with a new written tool or protocol. It will take a new mindset.

Because I spent many years looking at videos of music teachers, while serving as a developer for the National Board’s music assessment, I also understand that there are limitations in evaluating teachers by observing their lessons.

For example: You have to know what the teacher’s learning goals were, going into the lesson, and have some context around who’s in the room. The core competency for nearly all teaching is knowing the students in front of you. You can’t build effective lessons without that knowledge. And that’s hard to evaluate.

I used to teach with a man who didn’t bother to learn the students’ names, because the classes were large—60 or more. His rationale was that learning names was time that could be better spent delivering content. He delivered a whole lot of content, all right, but never got great results, because there was no human relationship glue inspiring students to use that content.

Try to put that into an evaluation tool.

Dr. Mary Kennedy, one of my grad school professors, had a video library of teachers teaching. She would usually show two videos, and then ask us to compare and contrast—and roughly evaluate. One pair of videos (and discussion) that I remember:

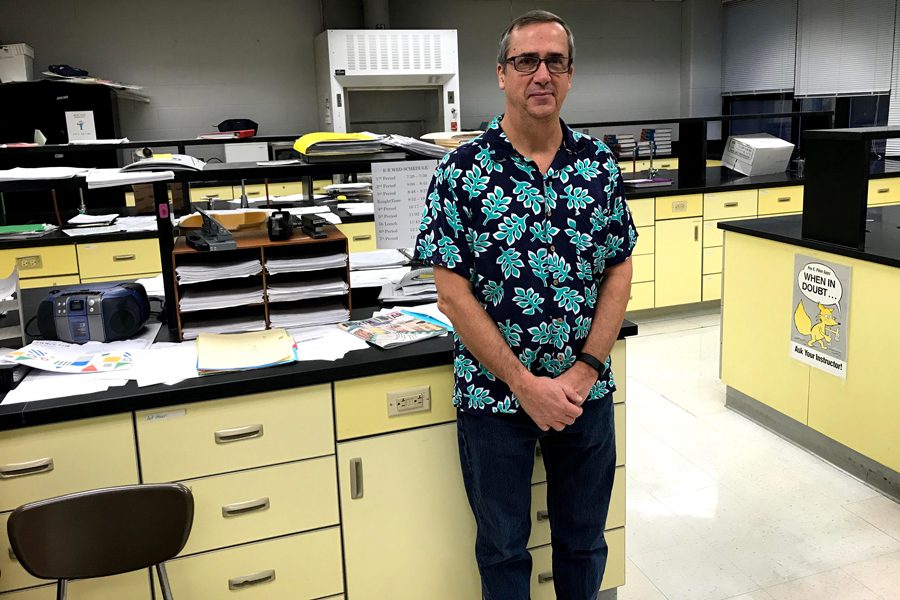

- A man in a Hawaiian shirt, cargo shorts and flip-flops is facilitating a hands-on science experiment with a half-dozen groups of middle school students, clustered around lab tables. The room is noisy as students manipulate equipment and fill out lab reports, but the teacher is wearing a mic that picks up his comments and students’ questions as he moves from table to table. Several times, when students ask a direct question, he turns it back to them—What do YOU think? Why? Once, he claps his hands and asks the entire class to re-examine the stated purpose of the experiment. There is a beat of quiet, and then students are back to talking and writing. The video picks up students who appear to be off-task, as well, looking at the camera or talking to someone at another table.

- A young woman is teaching a HS literature class. She is well-dressed and very articulate. The video begins with a Q & A exchange about the assigned reading, with a young man wearing a navy blazer and tie. The questions probe facts from the text—Who is the real victim in this chapter? Does this take place before or after the barn-raising scene and why is that important to the narrative? —and the young man has clearly done the reading, as his answers are all correct. The camera moves back and we see there are about eight teenaged boys in the class, all in blazers. She cold-calls the students, in turn, and they all answer her questions correctly. Other than the questions and short answers, the class is silent.

After watching the two videos, Dr. Kennedy asked: Which was the best lesson? Who was the best teacher? The class was vehemently divided, and remember, these were all graduate students in education. Imagine showing two similar videos to a legislator or one of the Moms 4 Liberty, then asking them to pick out the “best” teacher.

Ironically, the current quest to limit controversy and hot topics in public school classrooms makes it even more difficult to evaluate teacher practice. The best lessons—the ones that stick—are often messy and hard-won. And our best teachers—articulate, student-focused and creative—are being shut down by the very people designing their evaluation procedures.

We used to laugh at the inadequate teacher evaluation checklists—Is the teacher dressed neatly and well-groomed?—prevalent in the 1970s. But we haven’t solved the problem of how to evaluate all teachers fairly and productively. Yet.

As someone who crafted a teacher evaluation system back when I was employed, I argued (and continue to argue as a retired teacher) that “evaluation” programs are misnamed. Such systems should be quality enhancement systems, not “put a value on it” systems. All such systems should focus on creating goals that will help teachers improve their teaching. The only case for administrative action would be when a teacher makes no progress on their goals. And goals not met in a cycle need to be reframed or re-issued. Any system not based upon improvement goals is fictitious and specious to boot.


Well said, Steve. And (from the other side, those doing the “evaluating”) any time you’re filling out a form or observing a lesson that tells you nothing of value, you should ask: Who’s making me do this?


The reformsters love to complain that too many teachers are considered effective. What’s left out is that in most states, there’s a three-year trial period in which teachers are not tenured and can be dismissed without process by simply not being rehired. Those teachers who make it through that trial period likely know what they’re doing, even though they are still developing skill sets around content delivery, curriculum development and class management.

After a new evaluation system was set into place in my district, many veteran teachers began to get negative evaluations. Union officials found that the majority of these previously competent teachers had personal problems which spilled over into their classrooms: substance abuse, chronic illness, or intrusive life experiences such as death of a child or divorce. People don’t suddenly become incompetent for no reason.

In my own experience, I seldom had an evaluator who was well enough versed in the work I was doing to support my efforts toward improvement; that came from peers. To be fair, many administrators simply don’t have the time to spend in classrooms finding out what’s going on in there on a daily basis. Their evaluations tend to be more of a Rorschach impression based on: “I seldom / often have to step in”.
